File Upload With Metadata Using Spring Boot Rest Api Json

I have been wanting to showcase most of Couchbase's new search features available in 4.5 in one simple project. And there has been some interest recently in storing files or binaries in Couchbase. From a general perspective, databases are not made to store files or binaries. Usually what you would do is store files in a binary store and their associated metadata in the DB. The associated metadata will be the location of the file in the binary store and as much information as possible extracted from the file.

So this is the project I will show you today. It's a very simple Spring Boot app that lets the user upload files, stores them in a binary store, extracts the associated text and metadata from each file, and lets you search files based on that metadata and text. At the end you'll be able to search files by MIME type, image size, text content, basically whatever metadata you can extract from the file.

The Binary Store

This is a question we get often. You can certainly store binary data in a DB, but files should be in an appropriate binary store. I decided to create a very simple implementation for this example. There is basically a folder on the filesystem, declared at runtime, that will contain all the uploaded files. A SHA-1 digest will be computed from the file's content and used as the filename in that folder. You could obviously use other, more advanced binary stores like Joyent's Manta or Amazon S3 for instance. But let's keep things simple for this post :) Here's a description of the services used.

SHA1Service

This is the simplest one, with one method that basically sends back a SHA-1 digest based on the content of the file. To simplify the code even more I am using Apache commons-codec:

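    import java.io.IOException;
    import java.io.InputStream;

    import org.apache.commons.codec.digest.DigestUtils;
    import org.springframework.stereotype.Service;

    @Service
    public class SHA1Service {

        // Returns the SHA-1 digest of the stream's content as a hex string.
        public String getSha1Digest(InputStream is) {
            try {
                return DigestUtils.sha1Hex(is);
            } catch (IOException e) {
                throw new RuntimeException(e);
            }
        }
    }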
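If you would rather not pull in commons-codec, the same digest can be computed with the JDK alone. The following is only a sketch: `Sha1Sketch` and `sha1Hex` are hypothetical names that are not part of the project, and it assumes lowercase hex output is what you want for the filename.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

// Hypothetical helper for illustration; the project itself uses DigestUtils.
class Sha1Sketch {

    // Streams the input through a SHA-1 MessageDigest and returns lowercase hex.
    static String sha1Hex(InputStream in) {
        try {
            MessageDigest md = MessageDigest.getInstance("SHA-1");
            byte[] buffer = new byte[8192];
            int read;
            while ((read = in.read(buffer)) != -1) {
                md.update(buffer, 0, read);
            }
            StringBuilder hex = new StringBuilder();
            for (byte b : md.digest()) {
                // Mask to 0..255 so negative bytes print as two hex digits.
                hex.append(String.format("%02x", b & 0xff));
            }
            return hex.toString();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        InputStream in = new ByteArrayInputStream("abc".getBytes(StandardCharsets.UTF_8));
        System.out.println(sha1Hex(in));
    }
}
```

Reading in chunks keeps memory use flat, which matters once uploads get close to the size limit.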

DataExtractionService

This service is here to extract metadata and text from the uploaded files. There are a lot of different ways to do so. I have chosen to rely on ExifTool and Poppler.

ExifTool is a great command-line tool to read, write and edit file metadata. It can also output metadata directly in JSON. And it's of course not limited to the Exif standard. It supports a wide variety of formats. Poppler is a PDF utility library that will allow me to extract the text content of a PDF. As these are command-line tools, I will use plexus-utils to ease the CLI calls.

There are two methods. The first one is extractMetadata and is responsible for ExifTool metadata extraction. It's the equivalent of running the following command:

    exiftool -n -json somePDFFile

The -n option is here to make sure all numeric values are given as numbers and not Strings, and -json makes sure the output is in JSON format. This can give you an output like this:

    [{
      "SourceFile": "Desktop/someFile.pdf",
      "ExifToolVersion": 10.11,
      "FileName": "someFile.pdf",
      "Directory": "Desktop",
      "FileSize": 20468,
      "FileModifyDate": "2016:03:29 13:50:29+02:00",
      "FileAccessDate": "2016:03:29 13:50:33+02:00",
      "FileInodeChangeDate": "2016:03:29 13:50:33+02:00",
      "FilePermissions": 644,
      "FileType": "PDF",
      "FileTypeExtension": "PDF",
      "MIMEType": "application/pdf",
      "PDFVersion": 1.4,
      "Linearized": false,
      "ModifyDate": "2016:03:29 02:42:32-07:00",
      "CreateDate": "2016:03:29 02:42:32-07:00",
      "Producer": "iText 2.1.6 by 1T3XT",
      "PageCount": 1
    }]

There is some interesting information here, like the MIME type, the size, the creation date and more. If the MIME type of the file is application/pdf then we can try to extract text from it with Poppler, which is what the second method of the service is doing. It's equivalent to the following CLI call:

    pdftotext -raw somePDFFile -

This command sends the extracted text to the standard output, which we can retrieve and put in a fulltext field in a JSON object. Full code of the service below:

    package org.couchbase.devex.service;

    import java.io.File;

    import org.codehaus.plexus.util.cli.CommandLineException;
    import org.codehaus.plexus.util.cli.CommandLineUtils;
    import org.codehaus.plexus.util.cli.Commandline;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.stereotype.Service;

    import com.couchbase.client.java.document.json.JsonArray;
    import com.couchbase.client.java.document.json.JsonObject;

    @Service
    public class DataExtractionService {

        private final Logger log = LoggerFactory.getLogger(DataExtractionService.class);

        public JsonObject extractMetadata(File file) {
            String command = "/usr/local/bin/exiftool";
            String[] arguments = { "-json", "-n", file.getAbsolutePath() };
            Commandline commandline = new Commandline();
            commandline.setExecutable(command);
            commandline.addArguments(arguments);
            CommandLineUtils.StringStreamConsumer err = new CommandLineUtils.StringStreamConsumer();
            CommandLineUtils.StringStreamConsumer out = new CommandLineUtils.StringStreamConsumer();
            try {
                CommandLineUtils.executeCommandLine(commandline, out, err);
            } catch (CommandLineException e) {
                throw new RuntimeException(e);
            }
            String output = out.getOutput();
            if (!output.isEmpty()) {
                JsonArray arr = JsonArray.fromJson(output);
                return arr.getObject(0);
            }
            String error = err.getOutput();
            if (!error.isEmpty()) {
                log.error(error);
            }
            return null;
        }

        public String extractText(File file) {
            String command = "/usr/local/bin/pdftotext";
            String[] arguments = { "-raw", file.getAbsolutePath(), "-" };
            Commandline commandline = new Commandline();
            commandline.setExecutable(command);
            commandline.addArguments(arguments);
            CommandLineUtils.StringStreamConsumer err = new CommandLineUtils.StringStreamConsumer();
            CommandLineUtils.StringStreamConsumer out = new CommandLineUtils.StringStreamConsumer();
            try {
                CommandLineUtils.executeCommandLine(commandline, out, err);
            } catch (CommandLineException e) {
                throw new RuntimeException(e);
            }
            String output = out.getOutput();
            if (!output.isEmpty()) {
                return output;
            }
            String error = err.getOutput();
            if (!error.isEmpty()) {
                log.error(error);
            }
            return null;
        }
    }

Fairly simple stuff, as you can see, once you use plexus-utils.
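For comparison, here is roughly what the two CLI calls could look like with the JDK's own ProcessBuilder instead of plexus-utils. This is a sketch under assumptions: `CliSketch` and its methods are hypothetical names, the executable path is passed in rather than hard-coded, and a non-zero exit code is treated as a failure, which differs from the service above (it logs stderr and returns null).

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Path;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper for illustration; not part of the original project.
class CliSketch {

    // Argument list equivalent to: exiftool -n -json <file>
    static List<String> exifToolCommand(String executable, Path file) {
        return Arrays.asList(executable, "-n", "-json", file.toAbsolutePath().toString());
    }

    // Argument list equivalent to: pdftotext -raw <file> -
    static List<String> pdfToTextCommand(String executable, Path file) {
        return Arrays.asList(executable, "-raw", file.toAbsolutePath().toString(), "-");
    }

    // Runs the command and returns everything it wrote to standard output.
    static String run(List<String> command) {
        try {
            // stderr goes to the parent's stderr so it cannot fill up and block.
            Process process = new ProcessBuilder(command)
                    .redirectError(ProcessBuilder.Redirect.INHERIT)
                    .start();
            ByteArrayOutputStream captured = new ByteArrayOutputStream();
            try (InputStream stdout = process.getInputStream()) {
                byte[] buffer = new byte[8192];
                int read;
                while ((read = stdout.read(buffer)) != -1) {
                    captured.write(buffer, 0, read);
                }
            }
            int exitCode = process.waitFor();
            if (exitCode != 0) {
                throw new IllegalStateException(command.get(0) + " exited with code " + exitCode);
            }
            return new String(captured.toByteArray(), StandardCharsets.UTF_8);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
    }
}
```

Passing the arguments as a list, rather than one concatenated string, means a filename with spaces reaches the tool as a single argument.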

BinaryStoreService

This service is responsible for running the data extraction and for storing, deleting or retrieving files. Let's start with the storing part. Everything happens in the storeFile method. The first thing to do is retrieve the digest of the file, then write the file to the binary store folder declared in the configuration. Once the file is written, the data extraction service is called to retrieve the metadata as a JsonObject. Then the binary store location, document type, digest and filename are added to that JSON object. If the uploaded file is a PDF, the data extraction service is called again to retrieve the text content and store it in a fulltext field. Then a JsonDocument is created with the digest as key and the JsonObject as content.

    public void storeFile(String name, MultipartFile uploadedFile) {
        if (!uploadedFile.isEmpty()) {
            try {
                // The digest of the content doubles as filename and document key.
                String digest = sha1Service.getSha1Digest(uploadedFile.getInputStream());
                File file2 = new File(configuration.getBinaryStoreRoot() + File.separator + digest);
                BufferedOutputStream stream = new BufferedOutputStream(new FileOutputStream(file2));
                FileCopyUtils.copy(uploadedFile.getInputStream(), stream);
                stream.close();
                // Extract metadata with ExifTool, then add our own fields.
                JsonObject metadata = dataExtractionService.extractMetadata(file2);
                metadata.put(StoredFileDocument.BINARY_STORE_DIGEST_PROPERTY, digest);
                metadata.put("type", StoredFileDocument.COUCHBASE_STORED_FILE_DOCUMENT_TYPE);
                metadata.put(StoredFileDocument.BINARY_STORE_LOCATION_PROPERTY, name);
                metadata.put(StoredFileDocument.BINARY_STORE_FILENAME_PROPERTY, uploadedFile.getOriginalFilename());
                String mimeType = metadata.getString(StoredFileDocument.BINARY_STORE_METADATA_MIMETYPE_PROPERTY);
                if (MIME_TYPE_PDF.equals(mimeType)) {
                    // PDFs also get their text content indexed.
                    String fulltextContent = dataExtractionService.extractText(file2);
                    metadata.put(StoredFileDocument.BINARY_STORE_METADATA_FULLTEXT_PROPERTY, fulltextContent);
                }
                JsonDocument doc = JsonDocument.create(digest, metadata);
                bucket.upsert(doc);
            } catch (Exception e) {
                throw new RuntimeException(e);
            }
        } else {
            throw new IllegalArgumentException("File empty");
        }
    }

Reading or deleting should be pretty straightforward to understand now:

    public StoredFile findFile(String digest) {
        File f = new File(configuration.getBinaryStoreRoot() + File.separator + digest);
        if (!f.exists()) {
            return null;
        }
        JsonDocument doc = bucket.get(digest);
        if (doc == null)
            return null;
        StoredFileDocument fileDoc = new StoredFileDocument(doc);
        return new StoredFile(f, fileDoc);
    }

    public void deleteFile(String digest) {
        File f = new File(configuration.getBinaryStoreRoot() + File.separator + digest);
        if (!f.exists()) {
            throw new IllegalArgumentException("Can't delete file that does not exist");
        }
        f.delete();
        bucket.remove(digest);
    }
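To see the file side of this in isolation, here is a database-free sketch of the same store, find and delete cycle using java.nio. Everything in it is hypothetical (`ContentStoreSketch`, `inTempDirectory`, the use of a temporary directory as store root); it leaves out Couchbase and the metadata extraction entirely.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.Optional;

// Hypothetical, simplified stand-in for the file handling in BinaryStoreService.
class ContentStoreSketch {

    private final Path root;

    ContentStoreSketch(Path root) {
        this.root = root;
    }

    // Convenience factory for experiments: uses a fresh temporary directory.
    static ContentStoreSketch inTempDirectory() {
        try {
            return new ContentStoreSketch(Files.createTempDirectory("binaryStore"));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Writes the bytes to <root>/<sha1-hex> and returns the digest.
    String store(byte[] content) {
        String digest = sha1Hex(content);
        try {
            Files.createDirectories(root);
            Files.write(root.resolve(digest), content);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return digest;
    }

    // Returns the stored file's path, or empty if nothing is stored under the digest.
    Optional<Path> find(String digest) {
        Path candidate = root.resolve(digest);
        return Files.isRegularFile(candidate) ? Optional.of(candidate) : Optional.empty();
    }

    // Returns true if a file was actually removed.
    boolean delete(String digest) {
        try {
            return Files.deleteIfExists(root.resolve(digest));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    static String sha1Hex(byte[] content) {
        try {
            StringBuilder hex = new StringBuilder();
            for (byte b : MessageDigest.getInstance("SHA-1").digest(content)) {
                hex.append(String.format("%02x", b & 0xff));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException(e);
        }
    }
}
```

Because the filename is derived from the content, uploading the same bytes twice lands on the same file, so identical uploads are stored only once.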

Please keep in mind that this is a very naïve implementation!

Indexing and Searching Files

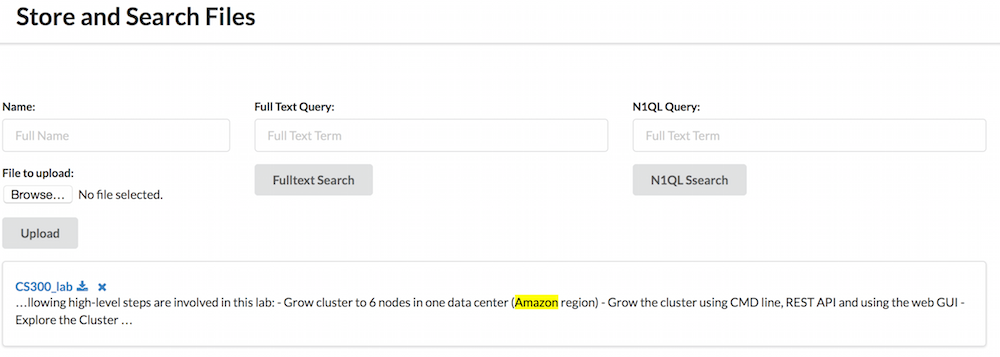

Once you have uploaded files, you want to retrieve them. The first very basic way of doing so would be to display the full list of files. Then you could use N1QL to search them based on their metadata or FTS to search them based on their content.

The Search Service

The getFiles method simply runs the following query: SELECT binaryStoreLocation, binaryStoreDigest FROM `default` WHERE type = 'file'. This sends the full list of uploaded files with their digest and binary store location. Notice the consistency option set to statement_plus. It's a document application so I prefer strong consistency.

Next you have searchN1QLFiles, which runs a basic N1QL query with an additional WHERE clause. So the default is the same query as above with an additional WHERE part. There is no tighter integration so far. We could have a fancy search form allowing the user to search for files based on their MIME type, size or any other field given by ExifTool.
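To make the "additional WHERE part" concrete, this sketch shows how the final statement is assembled from the base query and the user-supplied clause. `N1qlStatementSketch` and `withExtraClause` are hypothetical names, and the example clause assumes the document carries the MIMEType field that ExifTool produced earlier.

```java
// Hypothetical helper for illustration; the service concatenates the strings inline.
class N1qlStatementSketch {

    static final String BASE =
            "SELECT binaryStoreLocation, binaryStoreDigest FROM `default` WHERE type = 'file'";

    // Appends the extra clause as-is, so it has to start with AND (or OR).
    static String withExtraClause(String whereClause) {
        if (whereClause == null || whereClause.trim().isEmpty()) {
            return BASE;
        }
        return BASE + " " + whereClause.trim();
    }

    public static void main(String[] args) {
        System.out.println(withExtraClause("AND MIMEType = 'application/pdf'"));
    }
}
```

Since the clause is concatenated verbatim, this approach only suits trusted input; a real search form would more likely build the statement from known field names and bound parameters.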

And finally you have searchFulltextFiles, which takes a String as input and uses it in a Match query. The result is sent back with fragments of text where the term was found. These fragments allow highlighting of the term in context. I also ask for the binaryStoreDigest and binaryStoreLocation fields. They are the ones used to display the results to the user.

    public List<Map<String, Object>> getFiles() {
        N1qlQuery query = N1qlQuery
                .simple("SELECT binaryStoreLocation, binaryStoreDigest FROM `default` WHERE type= 'file'");
        query.params().consistency(ScanConsistency.STATEMENT_PLUS);
        N1qlQueryResult res = bucket.query(query);
        List<Map<String, Object>> filenames = res.allRows().stream()
                .map(row -> row.value().toMap())
                .collect(Collectors.toList());
        return filenames;
    }

    public List<Map<String, Object>> searchN1QLFiles(String whereClause) {
        N1qlQuery query = N1qlQuery.simple(
                "SELECT binaryStoreLocation, binaryStoreDigest FROM `default` WHERE type= 'file' " + whereClause);
        query.params().consistency(ScanConsistency.STATEMENT_PLUS);
        N1qlQueryResult res = bucket.query(query);
        List<Map<String, Object>> filenames = res.allRows().stream()
                .map(row -> row.value().toMap())
                .collect(Collectors.toList());
        return filenames;
    }

    public List<Map<String, Object>> searchFulltextFiles(String term) {
        SearchQuery ftq = MatchQuery.on("file_fulltext").match(term)
                .fields("binaryStoreDigest", "binaryStoreLocation").build();
        SearchQueryResult result = bucket.query(ftq);
        List<Map<String, Object>> filenames = result.hits().stream().map(row -> {
            Map<String, Object> m = new HashMap<String, Object>();
            m.put("binaryStoreDigest", row.fields().get("binaryStoreDigest"));
            m.put("binaryStoreLocation", row.fields().get("binaryStoreLocation"));
            m.put("fragment", row.fragments().get("fulltext"));
            return m;
        }).collect(Collectors.toList());
        return filenames;
    }

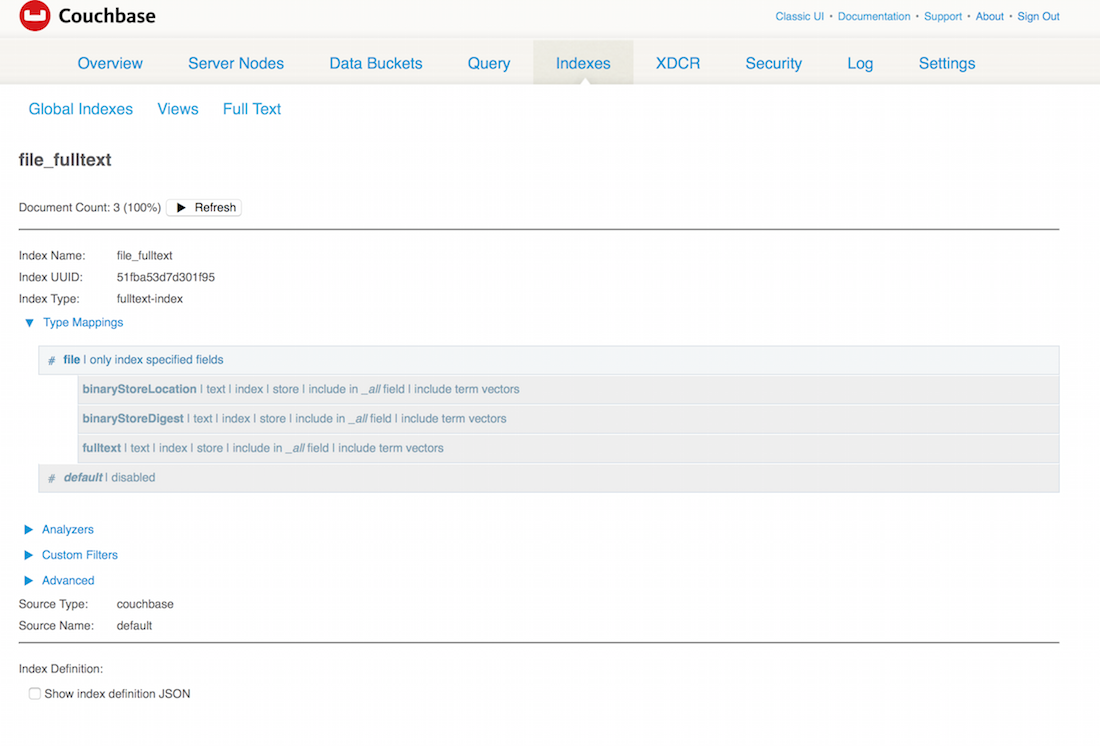

The MatchQuery.on method defines which index I am querying. Here it's set to 'file_fulltext', which means I have created a full-text index with that name.

Putting Everything Together

Configuration

A quick word about configuration first. The only thing configurable so far is the binary store path. Since I am using Spring Boot, I just need the following code:

    package org.couchbase.devex;

    import org.springframework.beans.factory.annotation.Value;
    import org.springframework.context.annotation.Configuration;

    @Configuration
    public class BinaryStoreConfiguration {

        @Value("${binaryStore.root:upload-dir}")
        private String binaryStoreRoot;

        public String getBinaryStoreRoot() {
            return binaryStoreRoot;
        }
    }

With that I can just add binaryStore.root=/Users/ldoguin/binaryStore to my application.properties file. I also want to allow uploads of up to 512MB. Also, to leverage the Spring Boot Couchbase autoconfig, I need to add the address of my Couchbase Server. In the end my application.properties looks like this:

    binaryStore.root=/Users/ldoguin/binaryStore
    multipart.maxFileSize: 512MB
    multipart.maxRequestSize: 512MB
    spring.couchbase.bootstrap-hosts=localhost
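The `${binaryStore.root:upload-dir}` placeholder means "use the property if it is set, otherwise fall back to upload-dir". A plain-Java analogue of that fallback, outside of Spring, could look like the sketch below; `BinaryStoreRootSketch` and `resolveRoot` are hypothetical names, and java.util.Properties only approximates how Spring resolves properties.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Hypothetical illustration of the key:default fallback; Spring does this for you.
class BinaryStoreRootSketch {

    // Parses properties text and returns binaryStore.root, or "upload-dir" if absent.
    static String resolveRoot(String propertiesText) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(propertiesText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props.getProperty("binaryStore.root", "upload-dir");
    }

    public static void main(String[] args) {
        System.out.println(resolveRoot("binaryStore.root=/Users/ldoguin/binaryStore\n"));
        System.out.println(resolveRoot("multipart.maxFileSize: 512MB\n"));
    }
}
```

Both `=` and `:` are accepted as key/value separators in the properties format, which is why the file above can mix them.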

Using the Spring Boot autoconfig simply requires having spring-boot-starter-parent as parent and Couchbase on the classpath. So it's only a matter of adding a Couchbase java-client dependency. I am specifying the 2.2.4 version here because it defaults to 2.2.3 and FTS is only in 2.2.4. You can take a look at the full pom file on GitHub. Kudos to Stéphane Nicoll from Pivotal and Simon Baslé from Couchbase for this wonderful Spring integration.

Controller

Since this application is very simple, I have put everything under the same controller. The most basic endpoint is /files. It displays the list of files already uploaded. It takes just one call to the searchService, puts the result in the page Model and then renders the page.

    @RequestMapping(method = RequestMethod.GET, value = "/files")
    public String provideUploadInfo(Model model) {
        List<Map<String, Object>> files = searchService.getFiles();
        model.addAttribute("files", files);
        return "uploadForm";
    }

I use Thymeleaf for rendering and Semantic UI as CSS framework. You can take a look at the template used here. It's the only template used in the application.

Once you have a list of files, you can download or delete them. Both methods call the binary store service; the rest of the code is classic Spring MVC:

    @RequestMapping(method = RequestMethod.GET, value = "/download/{digest}")
    public String download(@PathVariable String digest, RedirectAttributes redirectAttributes,
            HttpServletResponse response) throws IOException {
        StoredFile sf = binaryStoreService.findFile(digest);
        if (sf == null) {
            redirectAttributes.addFlashAttribute("message", "This file does not exist.");
            return "redirect:/files";
        }
        response.setContentType(sf.getStoredFileDocument().getMimeType());
        // Pass the filename as a format argument so a '%' in it cannot break the format string.
        response.setHeader("Content-Disposition",
                String.format("inline; filename=\"%s\"", sf.getStoredFileDocument().getBinaryStoreFilename()));
        response.setContentLength(sf.getStoredFileDocument().getSize());
        InputStream inputStream = new BufferedInputStream(new FileInputStream(sf.getFile()));
        FileCopyUtils.copy(inputStream, response.getOutputStream());
        return null;
    }

    @RequestMapping(method = RequestMethod.GET, value = "/delete/{digest}")
    public String delete(@PathVariable String digest, RedirectAttributes redirectAttributes,
            HttpServletResponse response) {
        binaryStoreService.deleteFile(digest);
        redirectAttributes.addFlashAttribute("message", "File deleted successfully.");
        return "redirect:/files";
    }

Obviously you'll want to upload some files too. It's a simple multipart POST. The binary store service is called, persists the file and extracts the appropriate data, then the controller redirects to the /files endpoint.

    @RequestMapping(method = RequestMethod.POST, value = "/upload")
    public String handleFileUpload(@RequestParam("name") String name, @RequestParam("file") MultipartFile file,
            RedirectAttributes redirectAttributes) {
        if (name.isEmpty()) {
            redirectAttributes.addFlashAttribute("message", "Name can't be empty!");
            return "redirect:/files";
        }
        binaryStoreService.storeFile(name, file);
        redirectAttributes.addFlashAttribute("message", "You successfully uploaded " + name + "!");
        return "redirect:/files";
    }

The last two methods are used for the search. They simply call the search service, add the result to the page Model and render it.

    @RequestMapping(method = RequestMethod.POST, value = "/fulltext")
    public String fulltextQuery(@ModelAttribute(value = "name") String query, Model model) throws IOException {
        List<Map<String, Object>> files = searchService.searchFulltextFiles(query);
        model.addAttribute("files", files);
        return "uploadForm";
    }

    @RequestMapping(method = RequestMethod.POST, value = "/n1ql")
    public String n1qlQuery(@ModelAttribute(value = "name") String query, Model model) throws IOException {
        List<Map<String, Object>> files = searchService.searchN1QLFiles(query);
        model.addAttribute("files", files);
        return "uploadForm";
    }

And this is roughly all you need to store, index and search files with Couchbase and Spring Boot. It is a simple app and there are many, many other things you could do to improve it, starting with a proper search form exposing the fields extracted by ExifTool. Multiple file uploads and drag and drop would be a nice plus. What else would you like to see? Let us know in the comments below!

Source: https://blog.couchbase.com/storing-indexing-searching-files-couchbase-spring-boot/
